Update, March 27, 2020: Thanks to COVID-19 and social distancing, Zoom is surging in popularity at the moment. There are hidden dangers here.

Zoom may have fixed the specific vulnerability discussed in the original post below, but it still violates user privacy in a number of deeply troubling ways, including some that quietly enable surveillance. For example, it apparently allows administrators to listen to calls recorded by other users; during meetings, it lets administrators track other participants’ activity and whether they’re paying attention. Some organizations, notably including the British Ministry of Defence, are banning Zoom’s use.

Please see this excellent article from the Electronic Frontier Foundation for more information on the impact of Zoom and other remote working tools on your privacy.

Original post date: 07-17-2019

Anyone paying attention to the tech press lately may have witnessed the kerfuffle involving a security problem with Zoom, a popular video-conferencing software package used by many companies, including Cantina. The flaw lies in the macOS version of the Zoom client, and together with the company’s handling of its discovery, it provides a master class in how not to think about the privacy and security of your users.

If you missed the fuss, Zoom has a convenient feature where someone can send a web link that goes to a particular online meeting. If a user receives such a link and clicks it, their web browser will magically open the Zoom software (assuming it’s installed) and join the meeting.

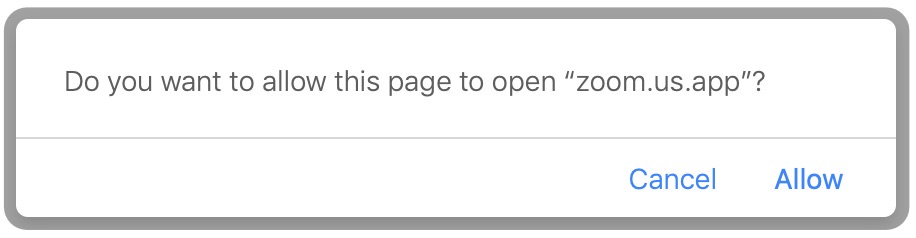

It happens seamlessly—at least, it used to. Apple introduced a new security measure in recent versions of its Safari browser, in the form of a warning that the browser is about to launch another application, which the user must allow or deny.

For Zoom, that meant that the user, having already clicked on a meeting link, would have to click a second time to approve the launch. In Zoom’s eyes, as they explained later, that extra click was unacceptable; it added too much “friction” to the act of joining a meeting.

To get around this situation, Zoom went to considerable lengths. They modified their installer to also install a secret helper program that would run on the user’s computer all the time. When the user clicked a meeting link, the browser would take them to Zoom’s website, which would use the browser to send a signal to this secret program, which in turn would launch Zoom into the meeting. This circuitous arrangement meant that the launch request no longer came directly from the browser, and so the confirmation prompt wouldn’t appear. Once more, the user would get into the meeting with only a single click.
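To make the arrangement concrete, here is a minimal Python sketch of the general pattern described above: a helper listening on the local machine that turns an HTTP request into an application launch. It is not Zoom’s code. The port number, the `/launch` path, the `meeting` parameter, and the `launch_meeting` function are all hypothetical, and the sketch only records the launch request rather than starting any program.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer
from urllib.parse import urlparse, parse_qs

HELPER_PORT = 8765  # hypothetical port, chosen for illustration only

launched = []  # stands in for "start the conferencing client"


def launch_meeting(meeting_id):
    """Placeholder: a real helper would start the desktop app here."""
    launched.append(meeting_id)


def handle_path(path):
    """Turn a request path such as /launch?meeting=123 into a launch.

    Returns the HTTP status code the helper would send back.
    """
    parsed = urlparse(path)
    if parsed.path != "/launch":
        return 404
    meeting = parse_qs(parsed.query).get("meeting")
    if not meeting:
        return 400
    # No confirmation prompt is involved: the request arrives over HTTP,
    # not through the browser's "open another application" mechanism.
    launch_meeting(meeting[0])
    return 200


class HelperHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        self.send_response(handle_path(self.path))
        self.end_headers()


def serve():
    # Bound to the loopback interface, so it is reachable only from the
    # same machine, which includes any page open in a local browser.
    HTTPServer(("127.0.0.1", HELPER_PORT), HelperHandler).serve_forever()
```

Notice that nothing in `handle_path` asks who sent the request. In this sketch, whoever can reach the port can trigger a launch.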

That’s pretty convenient, so what’s the problem?

Well, it turns out that any web page a user visited could signal that secret program and forcibly join a Zoom meeting on the user’s computer. It could add the user to a meeting they didn’t want to be in. It could join a thousand meetings at once and lock up the computer. Worse, if the user hadn’t changed Zoom’s default settings, the page could join a meeting with the camera activated, without warning and without giving the user a chance to stop it. (There’s more; see the original report if you’re curious.)
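The root of the problem is that a local helper like this has no built-in way to tell a trusted page from a hostile one. As a rough illustration, and an assumption rather than anything taken from the original report, here is the kind of check such a helper would need at a bare minimum: refuse any request that does not name an allowlisted origin. The `ALLOWED_ORIGINS` value and the `is_request_allowed` function are hypothetical.

```python
ALLOWED_ORIGINS = {"https://meetings.example.com"}  # hypothetical vendor site


def is_request_allowed(headers):
    """Return True only if the request names an allowlisted origin.

    `headers` is a plain dict of HTTP request headers. Requests that
    carry no Origin header are rejected rather than trusted.
    """
    origin = headers.get("Origin")
    return origin is not None and origin in ALLOWED_ORIGINS
```

Even this is only a partial measure. The `Origin` header is set by the browser, so other software on the machine can send whatever value it likes, and the check does nothing to restore the confirmation step that kept the user in the loop.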

In its handling of this feature and the resulting criticism, Zoom made an impressive number of missteps, all avoidable. Here are some of them:

Using a howitzer to kill a fly: The secret program Zoom installed was not the tiny helper one might expect. In fact, it was a fully fledged web server, capable of serving up web sites and images and more. Again, it was accessible to any other program running on the computer, and all that was done just to avoid a single click. The disproportion between the perceived problem and the workaround—its Rube Goldberginess—should have raised questions about whether this was a wise move.

Circumventing security: Zoom seems to have thought of the extra click only as an irritant getting in the way of their seamless launch. But it actually had a clear and important purpose as a security mechanism, a way to prevent malicious web sites from launching other programs without the user’s knowledge. To get around it, Zoom did the equivalent of making a hole through the wall of your house because it’s too much trouble to unlock the front door all the time. Their workaround, though creative, was expressly designed to undercut Apple’s efforts to protect the user. It’s probably for this reason that Apple, once the vulnerability became public, took the highly unusual step of updating macOS specifically to disable Zoom’s web server. (As this post was going to press, Apple extended this fix to cover other videoconferencing applications that use Zoom’s software.)

Keeping the user in the dark: Nothing in the Zoom installation indicated that it would install and run a web server on the side. It certainly didn’t give the user a chance to opt out. Worse, even if you uninstalled Zoom’s software, the web server remained behind and continued running (for a terrible reason that we will discuss in a moment). Zoom had made a questionable and self-serving risk/benefit analysis, concluding that the small user interface improvement of saving that extra click was worth evading platform security, and they took that decision out of the hands of the users who should have been making the choice.

Treating the user’s computer like their own: By silently installing this web server for their own purposes, not the user’s, Zoom was acting as if the computer were their own property, taking control and safety away from the actual owner. Nowhere is this clearer than in the fact that if a user deleted Zoom from their computer and then, later, clicked on a meeting link, the web server would silently download and reinstall Zoom in order to join the meeting. Clearly, the one and only goal Zoom was pursuing here was to make their software as foolproof to launch as possible, no matter what the cost, even if that meant undoing the user’s own express actions.

Ignoring the problem: When engineer Jonathan Leitschuh discovered the vulnerability and reported it to Zoom, giving them the conventional 90-day head start on a fix, their response was to do almost nothing. From their point of view, that was an entirely understandable decision. After all, they’d already made the decision that the security tradeoff was worth it, and all that had changed was that it wasn’t secret anymore. Apparently, even Leitschuh’s perspective didn’t lead them to reconsider how people might react to the discovery that someone was running a secret server on their laptops. Zoom did make the vulnerability slightly harder for hostile web sites to exploit, but Leitschuh had already demonstrated that getting around these restrictions was possible, so these updates were not much of an improvement.

Downplaying the significance: When the 90 days expired and Leitschuh went public, Zoom’s response, incredibly, was to stick to their guns and take their case to the public at large. “The local web server enables users to avoid this extra click before joining every meeting,” they explained in a statement. “We feel that this is a legitimate solution to a poor user experience problem, enabling our users to have faster, one-click-to-join meetings.” This was, predictably, a PR disaster. Users were understandably unimpressed by the argument that Zoom had installed insecure software on their machines, without their knowledge, for the paltry benefit of a saved click. Inevitably, within 48 hours, Zoom was forced to backpedal and remove the web server from their product.

Blaming the users: The most alarming of the security risks was the capability of forcing users into meetings with their camera on, turning their Zoom installation into a potential spying tool. It was true, as Zoom wrote in their official statement, that “users can configure their client video settings to turn OFF video when joining a meeting.” But that required the users to know about that setting and take specific action to change it. Making a system unsafe by default and blaming users for failing to reconfigure it is a classic mistake that nobody should still be making in 2019.
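In code terms, “safe by default” simply means that the risky behavior is the one the user has to opt into. The following Python sketch shows the idea with a hypothetical settings object; the class and field names are illustrative and do not correspond to Zoom’s actual configuration.

```python
from dataclasses import dataclass


@dataclass
class JoinSettings:
    # Hypothetical settings object; the field names are illustrative.
    video_on_join: bool = False  # camera stays off unless the user opts in
    audio_on_join: bool = False  # same for the microphone


def camera_starts_on(settings=None):
    """Decide whether the camera is live when a meeting is joined."""
    if settings is None:
        # A user who never opened the settings screen gets the safe default.
        settings = JoinSettings()
    return settings.video_on_join
```

With defaults like these, a user who never touches the settings joins with the camera off, and turning it on is a deliberate act rather than something to be discovered after the fact.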

The bottom line here is that these unwise design decisions were made to benefit Zoom, not their customers. The saved click is nice, but no end users needed it so badly that it was worth such drastic and unsafe measures. Nor was it worth undermining Apple’s attempts to protect the users. But this way, Zoom was able to keep touting their unparalleled ease of use. They had protected what they evidently saw as one of their crown jewels, but at the price of compromising their users’ security and privacy, about which they showed far too little concern.

One might also argue that they had assigned too much weight to that single-click launch as a market differentiator, as opposed to other trivial features like reliability, well-designed UI, audio and video quality, and so on. Better if they had considered the impact of preserving that feature at all costs and reluctantly given it up—that is, after all, what they had to do anyway, in the end. Balancing safety with convenience always leads to tradeoffs. Another option might be to give the user an informed choice, although explaining the situation would be a challenge in this case. (“Are you willing to click on a confirmation every time you launch a meeting, or would you rather live with the small risk that someone will activate your camera without your knowledge?”)

Unfortunately, by making this misjudgment and sticking with it too long, Zoom caused real, and possibly permanent, damage to their brand. Overnight, their product became associated with a disregard for security and neglect of their users’ privacy. Time will tell whether they recover and whether the self-inflicted damage compares favorably to the harm they expected from that extra click. As it stands, there’s every reason to ask whether you want a company with such a demonstrated lack of concern for safety to be installing software of any kind on your laptop.

The time when security and privacy could be treated as an afterthought has ended. Threats and breaches are in the news on a daily basis. We have to anticipate hostile users and prioritize security and privacy at every level of our products, from design through all phases of development. (It’s worth noting that in Zoom’s case the vulnerability arose through feature design, not through bugs in the software.) Protecting users is not just good for users—it’s good business for you. Don’t be Zoom.