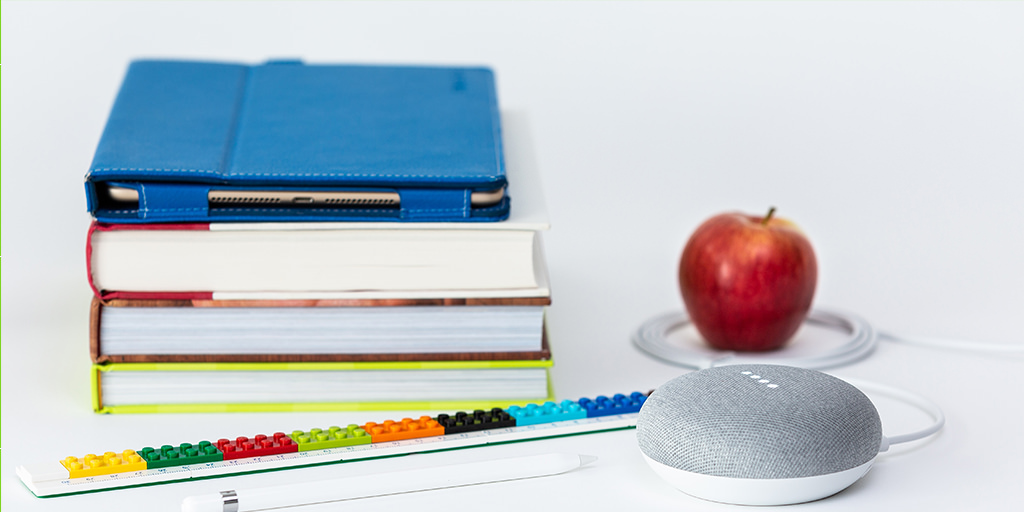

Voice Landscape

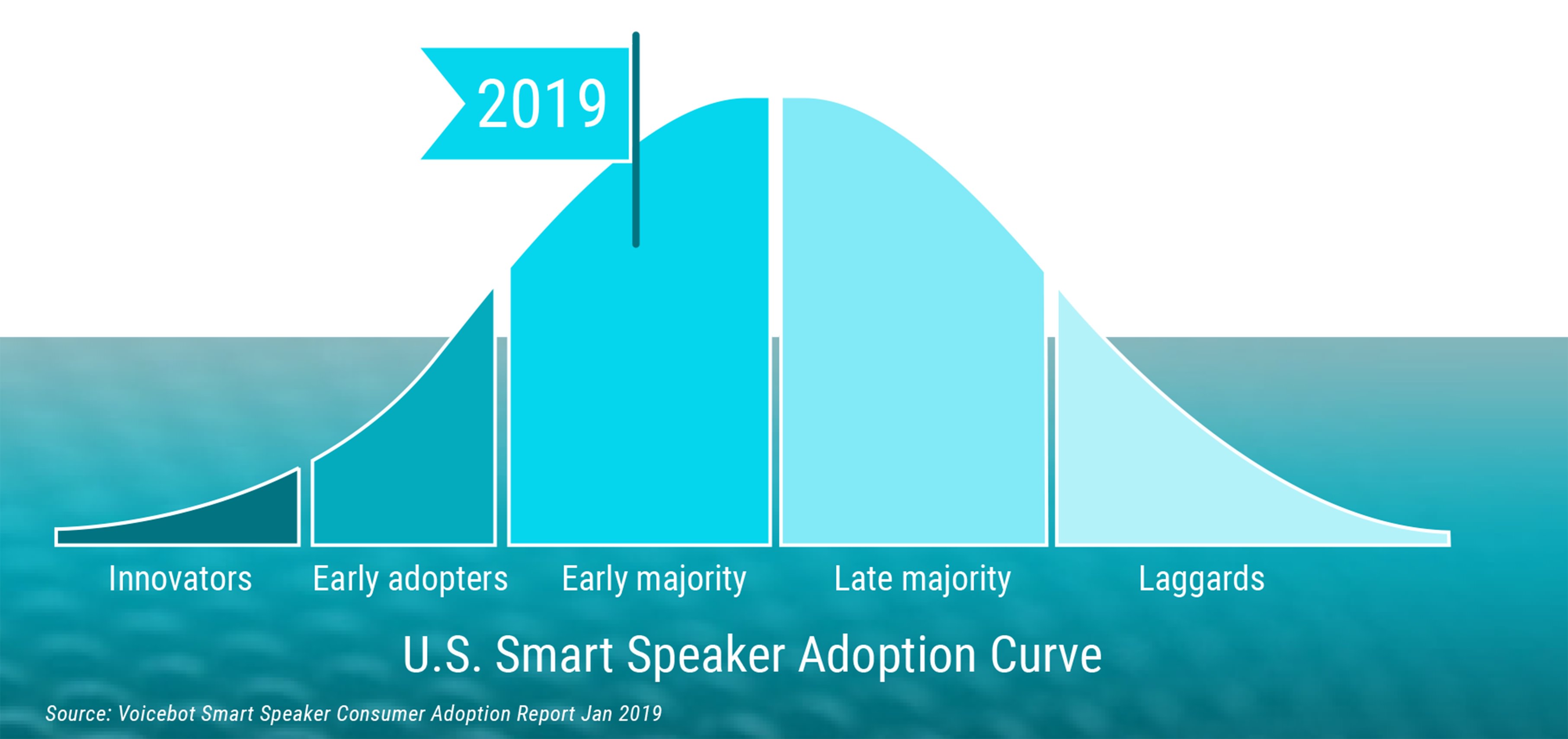

Smart speakers and voice assistants are opening a new way for people to interact with technology. These applications continue to rise in popularity and move along the innovation curve, from primarily appealing to early adopters to becoming part of everyday use for mainstream consumers.

From the same report as the image above, we see that more than 70% of smartphone users have tapped into voice assistant technology on their devices at least once. Additionally, “Of all voice assistant users on smartphones, 27.6% report being daily users. Among smart speaker owners, that figure rises to 39.8%.” Customers are curious enough about the technology to try it out, and many of them subsequently pursue habitual interactions with their preferred assistants.

Despite the productization of these applications, it’s critical to remember that the experience matters. It’s not enough to implement voice technology without providing real customer value—solving a problem—and actually being able to sustain a fluid, actionable conversation with a human user. So, as is core to developing any product, it’s extremely important when embarking on the journey of building a voice application to start with a clear problem to solve, craft a product vision, and test the idea within the context of the problem before diving right into implementation. Of note, only one of the tools described here, Botsociety, has built-in user testing capabilities, though this functionality leaves a lot to be desired.

With the voice landscape expanding rapidly, and therefore shifting constantly, here’s a deep-dive review of four prototyping tools currently available (free versions only) to help with conceptualizing and defining conversational experiences: Botsociety, Voiceflow, VUIX, and Tortu.

Botsociety

Botsociety positions itself as an app that enables you to visually prototype chatbot and voice assistant conversations. It targets a wider range of platforms than just Amazon Alexa and Google Assistant, including WhatsApp, Messenger, Slack, Hangouts, Twitter Bot, and your custom chatbot. A key differentiator is that you start by creating system and user dialog interactions in a visual device interface, and Botsociety builds an easy-to-export conversational flow diagram, with all branching variations, as you go.
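To make "all branching variations" a bit more concrete, here is a minimal sketch of a conversation flow as a tree of turns, with a helper that lists every path through it. This is only an illustration of the idea, not Botsociety's actual data model or export format; the `Turn` class, the `flow_paths` function, and the coffee-ordering dialog are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Turn:
    """One message in a conversation (hypothetical structure for illustration)."""
    speaker: str  # "system" or "user"
    text: str
    replies: list[Turn] = field(default_factory=list)  # possible next turns


def flow_paths(turn: Turn) -> list[list[str]]:
    """Return every start-to-end path through the flow, one list of lines per path."""
    line = f"{turn.speaker}: {turn.text}"
    if not turn.replies:
        # A turn with no replies ends the conversation.
        return [[line]]
    return [[line] + rest for reply in turn.replies for rest in flow_paths(reply)]


# Made-up coffee-ordering flow with two user branches.
flow = Turn("system", "What would you like to order?", [
    Turn("user", "A latte", [Turn("system", "One latte coming up.")]),
    Turn("user", "What do you have?", [Turn("system", "We have lattes and tea.")]),
])

for path in flow_paths(flow):
    print(" -> ".join(path))
```

Running this prints one line per branch, which is roughly what a per-path export of a branching flow would contain.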

Rating - 4/5

Pros

- Build integrations: Dialogflow, Rasa.ai, custom APIs for your codebase

- Import format: CSV

- Export formats: Video, PDF, CSV, GIF

- Unlimited real-time team collaboration, with commenting capabilities

- Templates with example flows are available, unique to each chosen bot platform

- Specify variable names, values, and (customizable) types for build integrations

- Tracks revision history

- Has built-in user testing capabilities, though these were tricky to understand in preview mode; investigate how well they fit your specific needs before committing to the tool.

Cons

- No live publish capabilities

- Each flow path is exported separately, one by one. This might be a limitation of the free version.

- Some iconography is unclear until you start playing around with the actions

- Drag-and-drop conversation reordering is possible but not obvious

Pricing tiers

- Free

- Professional level ($79/month/member)

- Enterprise level

Items to explore in future

- Variable definition and how this syncs with Dialogflow

- Prototype-to-build integrations

- Usability testing

Summary

Overall, there’s a very small learning curve (<30 minutes) to get a demo conversation up and running, with no technical expertise required, and to bring an abstract voice experience concept into an interactive, realistic state that you can engage with and refine. The visual interface makes it easy to tell whether you’re defining a system or a human response, and to see where paths diverge as you consider edge cases and proceed through the conversation. It’s quick and simple to preview an entire flow, then export a system-generated map of the conversation without any additional overhead.

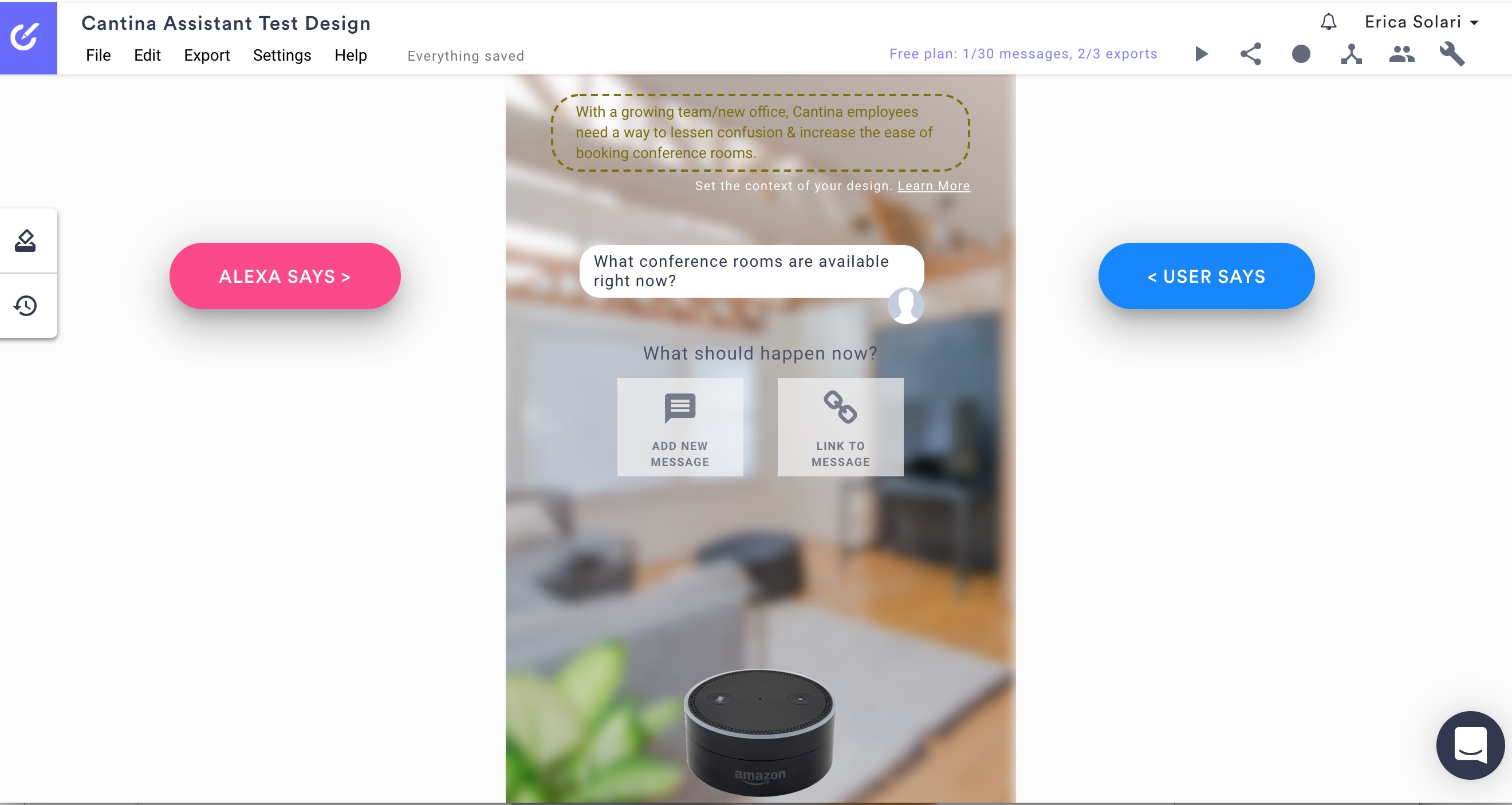

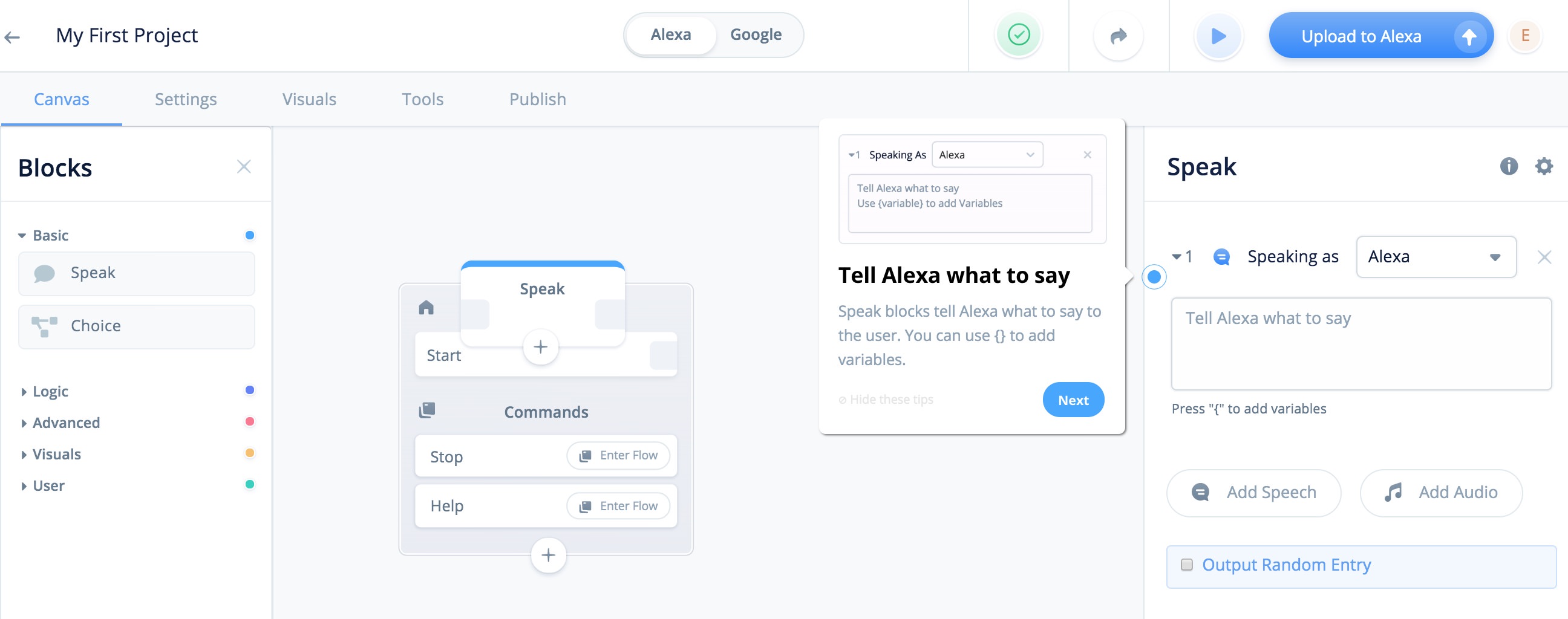

Voiceflow

Voiceflow is a tool that allows you to prototype and subsequently publish Amazon and Google voice applications. Though it is marketed as a no-coding-required platform, configuring the app well enough for a successful live rollout benefits from specifying some of the conditional response logic, which does require technical know-how. Voiceflow’s key differentiator is a “LEGO-style block system” that lets you expand your flow by selecting from the various components available.

Rating - 2/5

Pros

- Build integrations: Amazon, Google (beta), external APIs

- Upon first log-in, orientation video and overlay tour get you started

- Real-time team collaboration, with commenting capabilities

- Templates with example flows are available, with previews

- Preview the display for multimodal Amazon devices through the Alexa Developer Console before publishing your skill

- Has built-in tools for published skills: product monetization, basic analytics

Cons

- No import or export functionality

- Limited number of team collaborators, depending on payment tier

- Doesn’t track revision history

- No built-in user testing capabilities

- Some icon/action descriptions only make sense if you have technical knowledge—unclear UX that’s tough to learn

- Flow components are for system prompts, not user inputs. You can add user interactions, intents, and choices, but it’s hard to understand what information is required and how to build it into the flow.

- Adding displays for multimodal devices requires you to go through the Alexa Developer Console, export code, and then upload it back to Voiceflow, losing styling and formatting elements in the process

- Preview mode is a generic chat window that only allows you to enter text responses and only has audio for .wav sound effects, unless you manually add system audio files

Pricing tiers

- Free 1-month trial

- Professional level ($29/month/seat)

- Business level ($99/month/seat)

Items to explore in future

- User interaction and intents inclusion

- Prototype-to-build integrations and publishing

Summary

There’s a pretty steep learning curve to using this app to create a unique conversation flow, though the Voiceflow team does make an effort to provide guiding resources for first-time users. Reviewing the templates helped with understanding ideal product usage patterns, but it was very frustrating to outline a dialogue flow from scratch (the demo attempt was abandoned after several hours spent in vain), let alone get it far enough along to evaluate the quality of the experience. Having at least baseline technical knowledge and terminology may go a long way toward making this app more approachable and, ultimately, useful for prototyping.

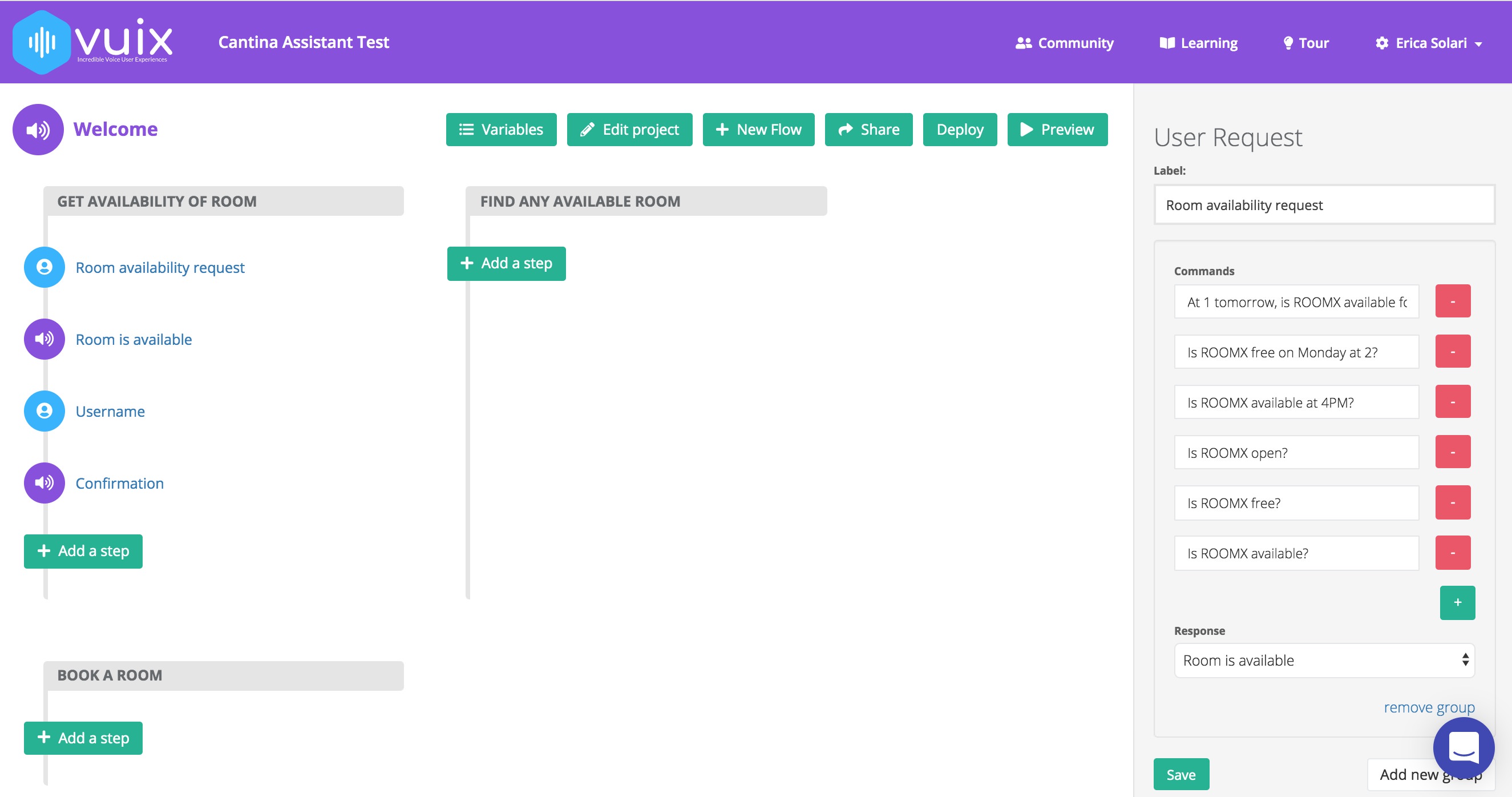

VUIX (beta version)

The objective of VUIX is to assist with the development of interactive voice prototypes that can be quickly previewed and deployed to Amazon Alexa and Google Assistant. Its key differentiator is taking user intents into consideration to guide flow development, meaning that it starts with the end-user goal or objective to frame the context of each conversational flow path.
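For readers new to the term, an intent can be pictured as a named user goal plus a few sample phrasings, where curly-brace placeholders mark the variable parts (often called slots on Alexa-style platforms). The sketch below illustrates that idea only; it is not how VUIX stores or matches intents, and the `INTENTS` table, `template_to_regex`, and `match_intent` names are assumptions made up for this example. Real assistants use trained language models rather than exact pattern matching.

```python
from __future__ import annotations

import re

# Hypothetical intents: each user goal maps to a few sample phrasings.
# Placeholders in curly braces mark variable values to capture.
INTENTS = {
    "OrderDrink": ["order a {size} {drink}", "I would like a {drink}"],
    "CancelOrder": ["cancel my order"],
}


def template_to_regex(template: str) -> re.Pattern[str]:
    """Turn 'order a {size} {drink}' into a regex with one named group per placeholder."""
    # Splitting on a capture group alternates literal text and placeholder names.
    parts = re.split(r"\{(\w+)\}", template)
    pattern = "".join(
        f"(?P<{part}>.+?)" if i % 2 else re.escape(part)
        for i, part in enumerate(parts)
    )
    return re.compile(f"^{pattern}$", re.IGNORECASE)


def match_intent(utterance: str) -> tuple[str, dict[str, str]] | None:
    """Return (intent name, captured values) for the first matching phrasing, else None."""
    for intent, templates in INTENTS.items():
        for template in templates:
            match = template_to_regex(template).match(utterance.strip())
            if match:
                return intent, match.groupdict()
    return None


print(match_intent("Order a large latte"))
print(match_intent("cancel my order"))
```

Starting from the intent table and only then writing the dialog turns is one way to read "intent-first" flow design: each flow path answers exactly one entry in the table.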

Rating - 3/5

Pros

- Build integrations: officially coming soon, but you can already deploy to Amazon and Google

- Overlay tour is available upon first log-in, though it has a few major bugs

- Preview mode mimics true system-human conversation: system plays audio prompts and user replies aloud

- Templates are available to reference

- Specifying internal variables is straightforward, though figuring out how to integrate them into the flow takes more effort; the same may be true of the Amazon variables option

- Clear drag-and-drop conversation reordering

Cons

- Each flow is a standalone, linear path, rather than encompassing all response branches

- No real-time team collaboration or commenting capabilities—link share only

- No import or export functionality

- Doesn’t track revision history

- No built-in user testing capabilities

- Template preview is not available prior to selection

- Noticeable spelling errors and grammar inconsistencies

- It’s unclear what information some fields (e.g., groups, commands) require and how they impact the flow

Pricing

- All beta functionality is free—upgrade options are coming soon

Items to explore in future

- Prototype deploy process

- Post-beta settings, integrations, upgrade features, as well as pricing models

Summary

With a fairly easy learning curve to generate and refine a demo conversation flow path (<30 minutes), VUIX sticks to its claims of maintaining a voice-first outlook—as evidenced in its voice-only preview mode—and not requiring customers to have a technical background to successfully create interactive prototypes. However, finding a better way to capture conversational variants or alternative response paths would be a helpful addition. It will be worth reviewing the post-beta capabilities to see how the team can further differentiate this product and take it to the next level.

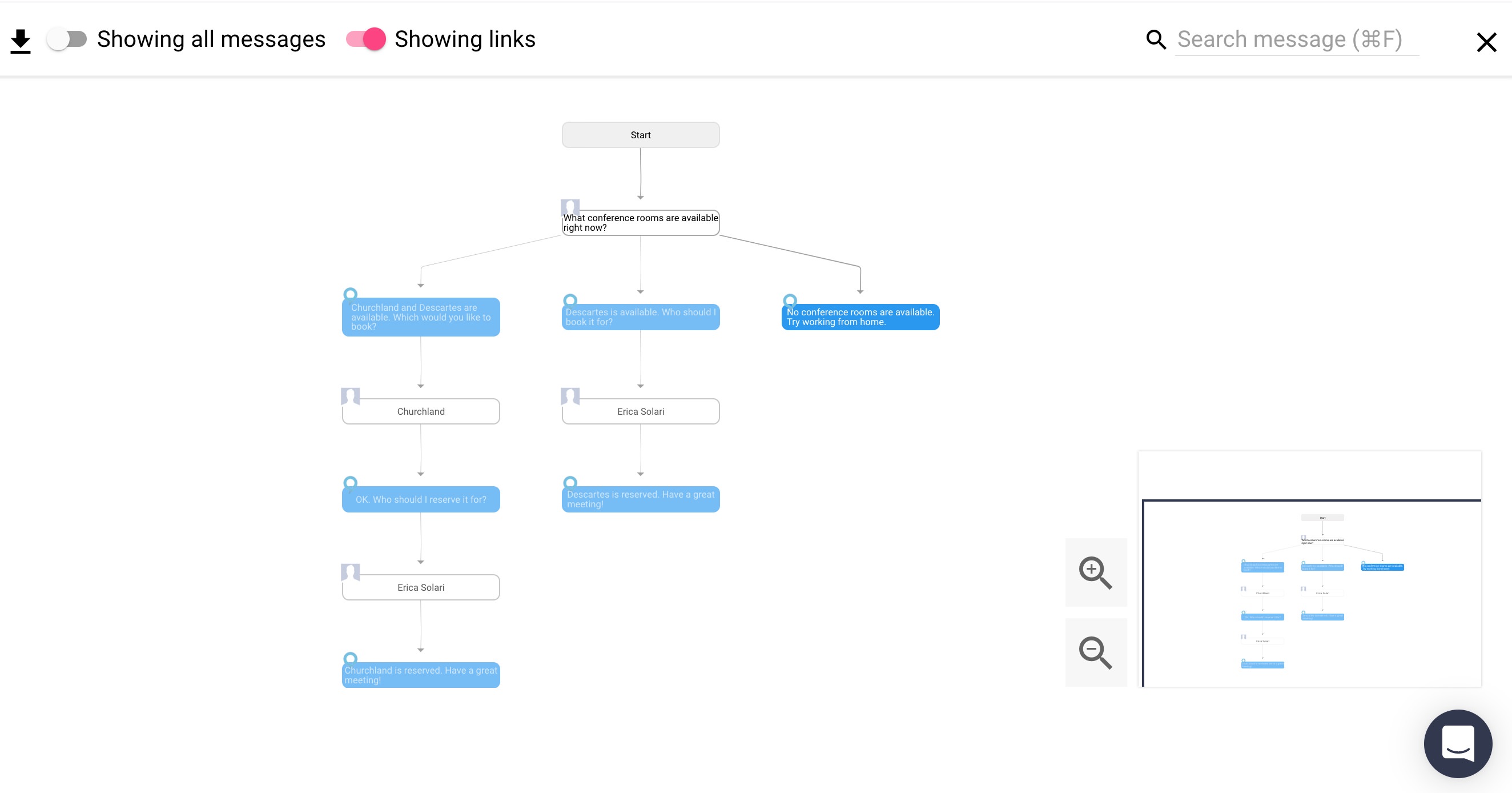

Tortu (beta version)

Tortu’s value proposition is enabling users to seamlessly write and visualize conversations for Amazon Alexa, Google Assistant, chatbots, and interactive voice response (IVR) systems. Through system-generated flowchart diagrams and interactive prototyping capabilities, customers can directly engage with, test, and ultimately enhance their voice experiences. Its key differentiator is the ever-expanding flowchart functionality: as you add steps and connect them back to the conversation paths, the chart rearranges itself so the flow is clear for all variations.

Rating - 4/5

Pros

- Import format: JSON

- Export formats: JSON, PDF or PNG flow snapshots—bonus points for file download names cleanly matching the flow names

- Onboarding and general help tips: upon first log-in, a “quest” guides you through a quick series of actionable steps that introduce you to the product.

- View alternate response paths all together

- Comments allowed

- Prototype preview mode mimics true system-human conversation: system plays audio prompts and user has option to reply aloud or by text chat

- Templates are available to reference

- Specifying and integrating variables (“slots”) is straightforward

Cons

- It’s easy to get lost on the flow canvas

- No real-time team collaboration—link share only

- Doesn’t track revision history

- No built-in user testing capabilities

- No deploy integrations

- Noticeable spelling errors and grammar inconsistencies

Pricing

- All beta functionality is free—tiered options are coming soon

Items to explore in future

- Streamlining slot incorporation into utterance examples

- Post-beta settings and pricing model

- Tortu X (alpha version available as of 7/11/19 for early users)—self-described as a new VUI tool that allows you to “design with ‘dialog turns’ in a canvas-like workspace.”

Summary

It took very little time to spin up a demo flow using Tortu (<30 minutes, including adding slots): the learning curve is small and the platform’s help tips and guides are very useful. Tortu’s “About us” section on its website stresses its mission of enabling people to speak to devices the same way they would to other humans, and it’s clear from the highly interactive chat prototype that they are on the path to achieving that goal. The flow canvas takes some getting used to, but ultimately, it’s quick and easy to preview the flow branches, then export the system-generated conversation map. This is another tool to watch post-beta, to see how the team augments its already-engaging experience.

Conclusion

The voice landscape is rapidly evolving, and as with so many products today, differentiation is based on the quality of the customer’s experience. The tools outlined here are meant to assist with conceptualizing and building better voice experiences. Overall, Botsociety and Tortu are probably the best of these options for defining, testing, and iterating on cohesive conversations, including all the alternate paths and interactive formats (voice, text, or a combination of the two) available to users for a variety of chatbots and voice assistants. VUIX is a good option for detailing linear dialogue scenarios, primarily for Alexa and Google applications. Voiceflow requires more advanced technical know-how and is more heavily focused on deploying to Amazon and Google than on crafting an interactive experience.

Between drafting a list of tools to review last fall and actually getting around to comparing them with this post, at least three others were deprecated or acquired; we’re eagerly awaiting one of these—Sayspring, integrated within Adobe XD—to approve our request for trial access. As voice applications continue to grow and change, the tools used to bring them to life shift just as quickly. Before beginning your prototype, it’s beneficial to evaluate your team’s goals and make sure a platform’s capabilities align with your needs and expectations for creating and testing experience-driven conversations.

Using another tool? We’d love to hear your opinions or work with you to make your conversational experience a reality!

The views and opinions expressed in this blog are those of the author(s) and do not reflect the official policy or positions of Cantina or any other agency, organization, or third party. The author has no current affiliation with any of the above vendors.